When I search for the permute function (torch.permute) I can only find the method. The irony is that its documentation tries to redirect to the function for more information, but (because it does not exist) it cannot link to it, as evident here.

The layers MaxPool2D, Flatten, Dense, Permute, and GlobalAveragePooling2D are imported from keras. … algorithms but is often used as a black box.

padding='same' pads the input so the output has the same shape as the input. However, this mode doesn’t support any stride values other than 1.
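The stride restriction mentioned above appears to describe the padding='same' mode of PyTorch convolution layers; that reading is an assumption, since the text does not name the layer. Under that assumption, a minimal sketch of the behaviour with nn.Conv2d (the tensor sizes are made up for illustration) could look like this:

```python
import torch
import torch.nn as nn

# Hypothetical input: one 3-channel 32x32 image (batch, channels, height, width)
x = torch.randn(1, 3, 32, 32)

# padding='same' keeps the spatial size when the stride is 1
same_conv = nn.Conv2d(3, 8, kernel_size=3, stride=1, padding="same")
same_shape = tuple(same_conv(x).shape)

# padding='valid' means no padding, so a 3x3 kernel trims one pixel per side
valid_conv = nn.Conv2d(3, 8, kernel_size=3, padding="valid")
valid_shape = tuple(valid_conv(x).shape)

# Combining padding='same' with a stride other than 1 is rejected
try:
    nn.Conv2d(3, 8, kernel_size=3, stride=2, padding="same")
    same_with_stride_ok = True
except ValueError:
    same_with_stride_ok = False
```

If a strided layer with "same"-like output is needed, the usual workaround is to compute the padding by hand and pass it as an integer or tuple.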

Image localization is an interesting application for me, as it falls right between image classification and object detection. Follow part 2 of this tutorial series to see how to train a classification model for object localization using CNNs and PyTorch. Then see how to save and convert the model to ONNX.

KFrank's method is a great ad hoc way to invert a permutation. If the argument is rather large (say >10000 elements) and you know it is a permutation (0…9999), then you could also use indexing: def inverse_permutation(perm): inv = torch.empty_like(perm); inv[perm] = torch.arange(perm. …

padding='valid' is the same as no padding. input: input tensor of shape (minibatch, in_channels, iH, iW). This operator supports complex data types. On certain ROCm devices, when using float16 inputs this module will use different precision for backward. In the formula, ⋆ is the valid cross-correlation operator, N is a batch size, C denotes a number of channels, and L is a length of signal sequence.

torch is definitely installed; otherwise other operations made with torch wouldn’t work either.

The itertools module implements a number of iterator building blocks inspired by constructs from APL, Haskell, and SML. Each has been recast in a form suitable for Python.

torch.moveaxis(input, source, destination) → Tensor. This function is equivalent to NumPy’s moveaxis function. input (Tensor): the input tensor.

Use of PyTorch permute in RCNN: I am looking at an implementation of RCNN for text classification using PyTorch. There are two points where the dimensions of tensors are permuted using the permute function. A related open issue on the PyTorch tracker is "Permutation of Sparse Tensor" (78422).
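The indexing approach to inverting a permutation quoted above is cut off after `torch.arange(perm.`, so the following is only a sketch of how it might be completed. The use of `perm.size(0)` and the `device`/`dtype` arguments are assumptions, not the original poster's exact code.

```python
import torch

def inverse_permutation(perm: torch.Tensor) -> torch.Tensor:
    """Return inv such that inv[perm[i]] == i for a 1-D permutation of 0..n-1."""
    inv = torch.empty_like(perm)
    # Assumed completion of the truncated snippet: write index i at position perm[i]
    inv[perm] = torch.arange(perm.size(0), device=perm.device, dtype=perm.dtype)
    return inv

perm = torch.randperm(10)
inv = inverse_permutation(perm)
# For a valid permutation, torch.argsort(perm) gives the same inverse
inv_by_sorting = torch.argsort(perm)
```

The indexing version does a single scatter in linear time, while the argsort route sorts the input, which may matter for the large permutations mentioned above.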

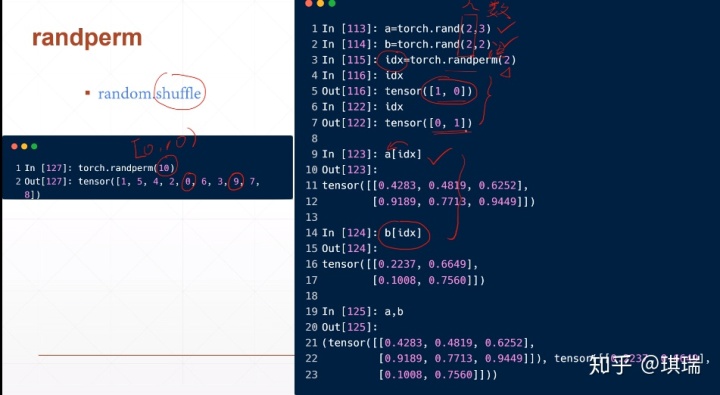

torch.randperm returns a random permutation of integers from 0 to n - 1. Parameters: n (int), the upper bound (exclusive). Keyword arguments: generator (torch.Generator, optional), a pseudorandom number generator for sampling; out (Tensor, optional), the output tensor; dtype (torch.dtype, optional), the desired data type of the returned tensor.

torch.from_numpy(ndarray) → Tensor creates a Tensor from a numpy.ndarray. The returned tensor and ndarray share the same memory. Modifications to the tensor will be reflected in the ndarray and vice versa.

I am guessing that you’re converting the image from h x w x c format to c x h x w. This is the issue: the mask is 2-dimensional, but you’ve provided 3 arguments to mask.permute().

The torch.moveaxis() function in PyTorch allows you to move axes to new positions while keeping the other axes in their original order. It rearranges the dimensions of a tensor; it does not reshape it. torch.moveaxis() is an alias for torch.movedim(), whereas torch.permute() takes the complete new ordering of all dimensions. When using these functions, it is important to consider the number of axes and their positions, as well as the shape of the tensor. The data type of the tensor is left unchanged, since these functions only rearrange dimensions.
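To make the h x w x c to c x h x w conversion and the memory-sharing remark above concrete, here is a small sketch. The array sizes and variable names are hypothetical, chosen only for illustration.

```python
import numpy as np
import torch

# Hypothetical 4x5 RGB image stored as height x width x channel
image_hwc = np.zeros((4, 5, 3), dtype=np.float32)
tensor_hwc = torch.from_numpy(image_hwc)  # shares memory with image_hwc

# permute takes the full new order of all dimensions
chw_permute = tensor_hwc.permute(2, 0, 1)
# moveaxis moves one axis and keeps the others in their original order
chw_moveaxis = torch.moveaxis(tensor_hwc, -1, 0)

# A write through the tensor is visible in the NumPy array
tensor_hwc[0, 0, 0] = 1.0

# A 2-D mask takes exactly two dimensions in permute
mask = torch.zeros(4, 5)
mask_t = mask.permute(1, 0)
```

Calling `mask.permute(2, 0, 1)` on the 2-D mask would raise an error, because the number of arguments must match the number of dimensions.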
